GCL TechTalk Series

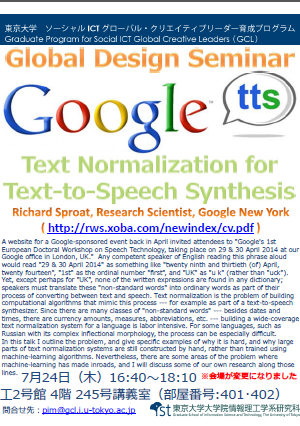

2014/07/24 Global Design Seminar: Text Normalization for Text-to-Speech Synthesis

Date: Thursday, July 24, 2014

Time: 16:40-18:10

Venue: Seminar Room #245 (RM#401-402), Faculty of Engineering Bldg. 2, Hongo Campus

Note: the venue has been changed to the room listed above.

Speaker: Richard Sproat, Research Scientist, Google New York

Abstract:

A website for a Google-sponsored event back in April invited attendees to “Google’s 1st European Doctoral Workshop on Speech Technology, taking place on 29 & 30 April 2014 at our Google office in London, UK.” Any competent speaker of English reading this phrase aloud would read “29 & 30 April 2014” as something like “twenty ninth and thirtieth (of) April, twenty fourteen”, “1st” as the ordinal number “first”, and “UK” as “u k” (rather than “uck”). Yet, except perhaps for “UK”, none of these written expressions are found in any dictionary; speakers must translate these “non-standard words” into ordinary words as part of their process of converting between text and speech. Text normalization is the problem of building computational algorithms that mimic this process, for example as part of a text-to-speech synthesizer. Since there are many classes of “non-standard words” (besides dates and times, there are currency amounts, measures, abbreviations, etc.), building a wide-coverage text normalization system for a language is labor-intensive. For some languages, such as Russian with its complex inflectional morphology, the process can be especially difficult.
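
As a purely illustrative sketch (not the system discussed in the talk), the example from the abstract can be handled with a few hand-written rules in Python. The function names, the one-entry abbreviation lexicon, and the regular expressions below are all assumptions made for this illustration; they cover only the date range, ordinal, and letter-sequence cases mentioned above, and hint at why wide coverage is labor-intensive.

```python
import re

# Number words for 1..19 (index 0 is unused) and for the tens.
ONES = ["", "one", "two", "three", "four", "five", "six", "seven", "eight",
        "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
        "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]
IRREGULAR_ORDINALS = {"one": "first", "two": "second", "three": "third",
                      "five": "fifth", "eight": "eighth", "nine": "ninth",
                      "twelve": "twelfth"}
MONTHS = ("January|February|March|April|May|June|July|August|"
          "September|October|November|December")
# Hypothetical lexicon of abbreviations that are read letter by letter.
LETTER_SEQUENCES = ["UK"]

def cardinal(n):
    """Spell out 0 <= n < 100 as a cardinal number."""
    if n < 20:
        return ONES[n] or "zero"
    tens, ones = divmod(n, 10)
    return TENS[tens] + (" " + ONES[ones] if ones else "")

def ordinal(n):
    """Spell out 1 <= n < 100 as an ordinal number."""
    words = cardinal(n).split()
    last = words[-1]
    if last in IRREGULAR_ORDINALS:
        last = IRREGULAR_ORDINALS[last]
    elif last.endswith("y"):
        last = last[:-1] + "ieth"   # thirty -> thirtieth
    else:
        last += "th"                # four -> fourth
    return " ".join(words[:-1] + [last])

def year(n):
    """Read a four-digit year as two pairs; crude for years like 2005."""
    hi, lo = divmod(n, 100)
    if lo == 0:
        return cardinal(hi) + " hundred"
    if lo < 10:
        return cardinal(hi) + " oh " + cardinal(lo)
    return cardinal(hi) + " " + cardinal(lo)

DATE_RANGE = re.compile(r"\b(\d{1,2}) & (\d{1,2}) (" + MONTHS + r") (\d{4})\b")
ORDINAL = re.compile(r"\b(\d{1,2})(?:st|nd|rd|th)\b")
LETTERS = re.compile(r"\b(" + "|".join(LETTER_SEQUENCES) + r")\b")

def normalize(text):
    """Apply the three toy rules in order: date ranges, ordinals, letters."""
    text = DATE_RANGE.sub(
        lambda m: "{} and {} of {}, {}".format(
            ordinal(int(m.group(1))), ordinal(int(m.group(2))),
            m.group(3), year(int(m.group(4)))),
        text)
    text = ORDINAL.sub(lambda m: ordinal(int(m.group(1))), text)
    text = LETTERS.sub(lambda m: " ".join(m.group(1).lower()), text)
    return text

print(normalize("Google's 1st European Doctoral Workshop on Speech Technology, "
                "taking place on 29 & 30 April 2014 at our Google office in "
                "London, UK."))
```

Run as written, this prints "Google's first European Doctoral Workshop on Speech Technology, taking place on twenty ninth and thirtieth of April, twenty fourteen at our Google office in London, u k." Every new class of non-standard word (currency amounts, measures, times) would need its own rules, and a language with rich inflection would additionally need to choose the correct case and gender for each spelled-out form.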

In this talk I outline the problem and give specific examples of why it is hard and why large parts of text normalization systems are still constructed by hand rather than trained using machine-learning algorithms. Nevertheless, there are some areas of the problem where machine learning has made inroads, and I will discuss some of our own research along those lines.

Richard Sproat, Research Scientist, Google New York

Richard Sproat received his Ph.D. in Linguistics from the Massachusetts Institute of Technology in 1985. He worked at AT&T Bell Labs, at Lucent’s Bell Labs, and at AT&T Labs-Research before joining the faculty of the University of Illinois. From there he moved to the Center for Spoken Language Understanding at the Oregon Health & Science University. In the fall of 2012 he moved to Google, New York, as a Research Scientist.